5 Agentic AI Capabilities That Actually Work in Production

- Naveen Kushalappa

- Mar 9

- 9 min read

An AI agent processes insurance claims at scale, autonomously routing decisions across multiple systems. In testing, the system performs as expected. Once in production, issues surface quickly. Within hours, decisions become erratic, audit trails are incomplete, and errors cascade across workflows.

This pattern is increasingly visible across enterprises. Industry research suggests that roughly 80% of the work required to make AI agents functional goes to data engineering, stakeholder alignment, governance, and workflow integration rather than model fine-tuning. The gap between a working prototype and a production-ready system is not about intelligence. It is about infrastructure, accountability, and resilience.

This guide covers the five agentic AI capabilities that consistently deliver results in production environments. It will help enterprise teams understand why so many initiatives stall, what infrastructure and governance foundations are non-negotiable, which capabilities drive measurable outcomes, what risks to plan for, and how to deploy agents safely at scale.

What Makes Agentic AI Ready for Production Environments?

Production readiness is not determined by model accuracy alone. It depends on whether the surrounding system can support reliability, traceability, and controlled autonomy at scale.

Three foundations consistently separate experimental agents from production systems:

Infrastructure built for continuity

Latency expectations depend heavily on the use case:

Fraud scoring and payment flows typically require 10 to 50 millisecond responses

Customer service agents need sub-100-millisecond response times to feel instant

Conversational workflows remain smooth when responses arrive within one second

Many vector search operations must complete in sub-millisecond time

Speed alone is insufficient. Production agents must operate on continuous, streaming data from transaction systems, market feeds, and customer events.

Equally important is failure recovery. When an agent stops mid-task, the system must resume processing without losing state. Mature deployments rely on event-driven, asynchronous architectures rather than direct function chaining, improving resilience across long-running workflows.
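A minimal sketch of the resume-without-losing-state idea, assuming a workflow can be expressed as named steps that each take and return a JSON-serializable state dictionary. The function name `run_with_checkpoints` and the file-based checkpoint are illustrative assumptions, not a reference to any particular orchestration product.

```python
import json
import os


def run_with_checkpoints(steps, checkpoint_path, state=None):
    """Run named steps in order, persisting progress after each one.

    `steps` is a list of (name, function) pairs. Each function takes the
    state dict and returns the updated state dict. If a checkpoint file
    already exists, steps recorded as done are skipped on restart.
    """
    done, state = [], dict(state or {})
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            saved = json.load(f)
        done, state = saved["done"], saved["state"]

    for name, fn in steps:
        if name in done:
            continue  # completed before the interruption
        state = fn(state)
        done.append(name)
        # Write to a temporary file and rename it, so a crash mid-write
        # does not leave a half-written checkpoint behind.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump({"done": done, "state": state}, f)
        os.replace(tmp_path, checkpoint_path)
    return state
```

If a step raises partway through, calling the function again with the same checkpoint path picks up at the failed step rather than repeating earlier ones. A production system would more likely keep this state in a durable store or event log than in a local file.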

Data maturity is the operational backbone

Agent output quality is tightly coupled to data quality. When the underlying data is stale, fragmented, or poorly structured, errors scale quickly.

Before moving to production, teams should confirm:

Data across sources is normalized into consistent, structured formats

Provenance, transformations, and downstream usage are fully traceable

Embedded documents carry source, version, and ingestion timestamps

Vector stores include alerting for outdated or superseded content
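The last two checks can be sketched in a few lines. This is an illustration only: the `EmbeddedDoc` fields, the `find_outdated` helper, and the 90-day age limit are assumptions chosen for the example, not requirements from any vector store.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class EmbeddedDoc:
    doc_id: str
    source: str            # where the document came from
    version: int           # version number within that source
    ingested_at: datetime  # when it was embedded


def find_outdated(docs, now, max_age=timedelta(days=90)):
    """Return sorted ids of documents that are superseded or too old."""
    latest = {}
    for d in docs:
        latest[d.source] = max(latest.get(d.source, d.version), d.version)

    flagged = set()
    for d in docs:
        superseded = d.version < latest[d.source]
        too_old = now - d.ingested_at > max_age
        if superseded or too_old:
            flagged.add(d.doc_id)
    return sorted(flagged)
```

The returned ids could feed an alert or a re-ingestion job, so stale content is caught before an agent retrieves it.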

Strong data lineage is not just a performance concern. It is also essential for regulatory defensibility.

Named ownership and accountability

Production agentic AI requires explicit human ownership. Every agent must have a defined role and a named owner responsible for its behavior.

Clear ownership enables:

Faster incident response

Defensible audit trails

Controlled autonomy boundaries

Regulatory readiness

If an AI system recommends a high-impact action and the responsible owner cannot be immediately identified, the system is not ready for production. Every recommendation and downstream action must be logged with timestamps and supporting rationale.
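One way to make that rule enforceable is to refuse to log, and therefore refuse to act, when no owner is on record. The sketch below assumes a simple in-memory owner registry and log list; `AuditRecord` and `record_action` are hypothetical names used only for illustration.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    agent_id: str
    owner: str
    action: str
    rationale: str
    timestamp: str


def record_action(log, agent_id, owners, action, rationale):
    """Append an audit record; reject agents with no named owner."""
    owner = owners.get(agent_id)
    if not owner:
        raise LookupError(f"agent {agent_id!r} has no named owner")
    if not rationale.strip():
        raise ValueError("a supporting rationale is required")
    record = AuditRecord(
        agent_id=agent_id,
        owner=owner,
        action=action,
        rationale=rationale,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(record)))  # one JSON line per action
    return record
```

In practice the log would go to append-only storage rather than a Python list, but the shape of the record is the point: who owns the agent, what it did, when, and why.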

The 5 Agentic AI Capabilities That Actually Work in Production

Enterprise teams are not struggling to build agent demos. They are struggling to run agents safely at scale. The difference comes down to five capabilities that consistently separate pilots from production systems.

1. Strategic reasoning and multi-step planning

Production agents must do more than respond. They need to break complex objectives into ordered, multi-step plans that hold up under real-world variability.

This includes:

Decomposing goals into executable tasks

Sequencing actions across systems

Adjusting plans when conditions change

Without strong planning discipline, agents create brittle workflows that collapse the moment inputs vary.
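As a rough illustration of decomposition, sequencing, and re-planning, a plan can be modeled as a dependency graph and ordered with Python's standard `graphlib` module (Python 3.9+). The task names and the `replace_task` helper are invented for the example.

```python
from graphlib import TopologicalSorter


def order_tasks(dependencies):
    """Return tasks in an executable order.

    `dependencies` maps each task to the set of tasks it depends on.
    A circular plan raises graphlib.CycleError instead of running.
    """
    return list(TopologicalSorter(dependencies).static_order())


def replace_task(dependencies, old, new):
    """Return a new plan with `old` swapped for `new` everywhere."""
    def swap(task):
        return new if task == old else task

    return {
        swap(task): {swap(dep) for dep in deps}
        for task, deps in dependencies.items()
    }


plan = {
    "verify_policy": set(),
    "assess_damage": {"verify_policy"},
    "approve_payout": {"assess_damage"},
    "notify_customer": {"approve_payout"},
}
```

Here `order_tasks(plan)` yields the four steps in dependency order. If conditions change, for example the payout exceeds the agent's authority, `replace_task(plan, "approve_payout", "escalate_to_adjuster")` produces an adjusted plan that can be re-ordered the same way.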

2. Reliable execution with real-time monitoring

Calling tools is easy. Calling them safely and repeatedly under load is what production demands.

High-performing agents:

Validate tool inputs before execution

Monitor outputs against expected goals

Detect malformed or unexpected responses

Trigger retries or escalation when needed

Execution without monitoring creates a silent failure risk, especially in high-volume environments.
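The four behaviors listed above can be combined in a single wrapper around each tool call. This is a sketch under stated assumptions: `call_tool` is a hypothetical helper, and the validators are assumed to return `None` when everything is fine or a short problem description otherwise.

```python
def call_tool(tool, args, validate_input, validate_output, max_attempts=3):
    """Validate inputs, call the tool, check the output, retry, then escalate."""
    problem = validate_input(args)
    if problem:
        # Bad input is never sent to the tool.
        return {"status": "rejected", "reason": problem, "attempts": 0}

    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            result = tool(**args)
        except Exception as exc:  # tool crashed, timed out, auth failed, ...
            last_error = f"exception: {exc}"
            continue
        problem = validate_output(result)
        if problem is None:
            return {"status": "ok", "result": result, "attempts": attempt}
        last_error = f"malformed output: {problem}"

    # Retries exhausted: hand off to a human or a fallback path.
    return {"status": "escalated", "reason": last_error, "attempts": max_attempts}
```

The returned status makes silent failure harder: every call ends as `ok`, `rejected`, or `escalated`, and each outcome can be counted on a dashboard. A real deployment would likely add backoff between attempts, which is omitted here to keep the sketch short.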

3. Adaptive correction using memory and feedback

Production environments are messy. Inputs change, systems return edge cases, and workflows rarely follow the happy path.

Agents that hold up in production continuously self-correct by:

Maintaining persistent task memory

Learning from prior failures

Re-planning when intermediate steps fail

Preserving context across long-running workflows

This capability prevents small errors from compounding into system-level incidents.
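A deliberately simple sketch of memory-driven correction: the agent records which strategy failed for which kind of task and skips it on later attempts. `TaskMemory` and `run_with_fallbacks` are hypothetical names, and permanently skipping a failed strategy is a simplifying assumption; a real system would probably expire or re-test old failures.

```python
class TaskMemory:
    """Remembers which strategies have failed for each kind of task."""

    def __init__(self):
        self.failures = {}  # task kind -> set of failed strategy names

    def record_failure(self, kind, strategy):
        self.failures.setdefault(kind, set()).add(strategy)

    def failed(self, kind, strategy):
        return strategy in self.failures.get(kind, set())


def run_with_fallbacks(kind, strategies, memory, payload):
    """Try strategies in order, skipping ones that failed before for this kind.

    `strategies` is a list of (name, function) pairs. Returns the name of
    the strategy that worked together with its result.
    """
    for name, fn in strategies:
        if memory.failed(kind, name):
            continue  # learned from a prior failure
        try:
            return name, fn(payload)
        except Exception:
            memory.record_failure(kind, name)  # re-plan with the next option
    raise RuntimeError(f"all strategies exhausted for {kind!r}")
```

Because the memory object outlives a single task, the second document of the same kind goes straight to the strategy that worked, instead of repeating the failure first.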

4. Deep system integration across enterprise platforms

Standalone agents create isolated value. Production agents operate as connective tissue across the enterprise stack.

Real impact comes when agents can coordinate work across:

ERP systems

HRIS platforms

ITSM workflows

CRM environments

Internal knowledge bases

Enterprises see measurable cycle-time reduction only when agents can move work end-to-end across these boundaries.

5. Built-in governance, observability, and human control

This is where most initiatives fall apart.

Production-ready agentic systems require:

Named ownership for every agent

Full audit trails of decisions and actions

Role-based access with least-privilege enforcement

Real-time behavioural monitoring

Human approval checkpoints for sensitive actions

Kill switch mechanisms for rapid containment

Without these controls, autonomy quickly becomes operational risk.
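Three of these controls (least-privilege scope, human approval checkpoints, and a kill switch) can be expressed as one authorization gate that every action passes through. The `AgentGuard` class and its return strings are assumptions made for this sketch, not part of any named framework.

```python
import threading


class AgentGuard:
    """Gate that every agent action passes through before execution."""

    def __init__(self, allowed_actions, sensitive_actions):
        self.allowed = set(allowed_actions)      # least-privilege scope
        self.sensitive = set(sensitive_actions)  # need human sign-off
        self._killed = threading.Event()         # safe to set from any thread

    def kill(self):
        """Engage the kill switch; all later actions are blocked."""
        self._killed.set()

    def authorize(self, action, approved_by=None):
        if self._killed.is_set():
            return "blocked: kill switch engaged"
        if action not in self.allowed:
            return "blocked: outside least-privilege scope"
        if action in self.sensitive and not approved_by:
            return "pending: human approval required"
        return "allowed"
```

The order of checks matters: the kill switch is evaluated first so containment wins over everything else, including previously granted approvals.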

Enterprises that treat governance as a core capability, not an afterthought, are the ones successfully moving agentic AI from controlled pilots into regulated, high-stakes production environments.

Why Do Many Agentic AI Initiatives Fail to Scale?

Most agentic AI projects do not fail because the model is insufficient. They fail because the systems around the model are not built to hold up under real conditions.

Evaluation Methods That Miss the Real Failure Points

Traditional LLM evaluation treats agent systems as black boxes, measuring only the outcome. This misses critical failure points across the entire reasoning chain. Production-grade agents must handle a wide range of failure scenarios consistently:

Invalid tool invocations and malformed parameters

Unexpected tool response formats and authentication failures

Memory retrieval errors and mid-task context loss

Inappropriate planning outputs from the underlying reasoning model

Unless teams systematically assess how agents detect and recover from these failures, user interactions lose coherence as soon as an exception occurs.

Architectural Gaps That Compound Over Time

Production readiness demands a modular architecture where components can fail without collapsing the entire system. Many initiatives scale poorly because foundational design decisions are made too late. Key principles that must be in place from the start include:

A poly-cloud, poly-AI architecture to enable regulatory-compliant guardrails across vendors

An orchestration layer built to support retries, recovery, and human approval steps

Persistent state storage so agents do not lose context on restart

Asynchronous event-based communication rather than direct function calls

Role-based agent profiles with least-privilege access to memory, tools, and data

Fine-grained access control is essential. Granting agents only the minimum permissions required for their tasks reduces the attack surface and ensures compliance with security policies.
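Of the principles listed above, asynchronous event-based communication is the easiest to show in code. The sketch below uses Python's standard `asyncio.Queue` as a stand-in for a message broker; the agent names, event shape, and the 10,000 review threshold are illustrative assumptions.

```python
import asyncio


async def intake_agent(queue, claims):
    """Publish one event per claim instead of calling the router directly."""
    for claim in claims:
        await queue.put({"type": "claim_received", "claim": claim})
    await queue.put(None)  # sentinel: no more events


async def routing_agent(queue, routed):
    """Consume events at its own pace and record a routing decision."""
    while True:
        event = await queue.get()
        if event is None:
            break
        claim = event["claim"]
        lane = "manual_review" if claim["amount"] > 10_000 else "auto"
        routed.append((claim["id"], lane))


async def main(claims):
    queue = asyncio.Queue()
    routed = []
    await asyncio.gather(
        intake_agent(queue, claims),
        routing_agent(queue, routed),
    )
    return routed
```

Because the two agents share only the queue, either one can be restarted, scaled, or replaced without the other needing to change. With a durable broker in place of the in-memory queue, unprocessed events would also survive a crash.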

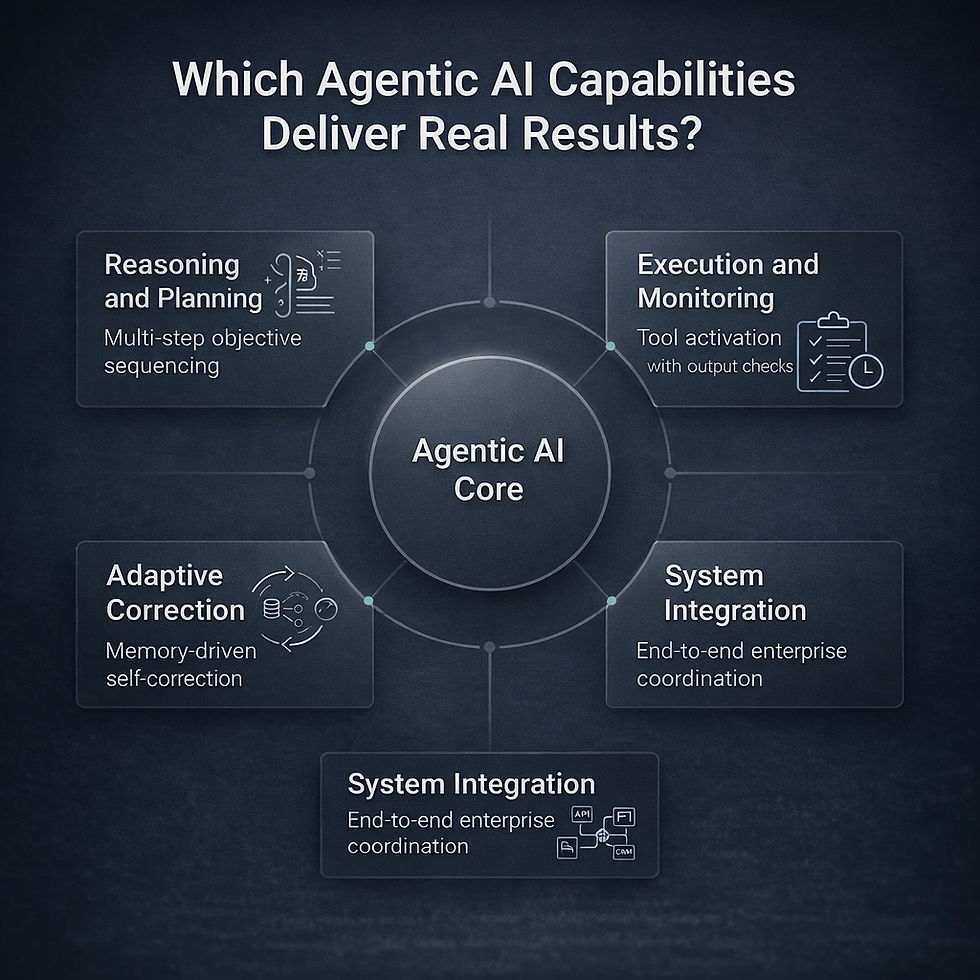

Which Agentic AI Capabilities Deliver Real Results in Production?

Reported adoption is high: 78% of organizations use AI agents in some form, and 85% have adopted agents in at least one process. Yet which capabilities deliver measurable outcomes remains unclear for most enterprises.

The Four Pillars of Effective Agentic AI

Setting governance aside, four functional capabilities separate AI agents that work from those that fail:

Reasoning and planning: Breaking large objectives into strategic, multi-step sequences

Execution and monitoring: Activating external tools while checking output against goals

Adaptive correction: Using memory and feedback to self-correct when tasks fail

System integration: Coordinating work across disconnected enterprise platforms like ERP, HRIS, ITSM, and CRMs

Research shows employees spend nearly 40% of their week searching for information. Agentic AI acts as the connective tissue across silos, integrating data, evaluating intent, and executing actions end to end.

How Enterprises Should Evaluate Agentic AI Fit

Before scaling agentic AI, production teams need a clear view of where autonomy genuinely adds value and where it introduces unnecessary risk.

The right starting point is not capability hype. It is workload suitability.

Production teams should evaluate whether the target workflow:

Contains high variability that is difficult to fully script

Requires coordination across multiple systems or data sources

Can tolerate iterative improvement without operational exposure

Has clear ownership, auditability, and rollback paths

Operates on data that is sufficiently structured and reliable

Agentic AI delivers the strongest results in complex, decision-heavy workflows that benefit from dynamic planning and cross-system orchestration.

In contrast, highly standardized, low-variance processes often perform better with deterministic automation. Production success depends less on how advanced the agent is and more on how deliberately the use case is selected.

What Technical and Operational Risks Should Teams Plan For?

Silent Drift and Cascade Failures

Agentic AI systems rarely fail suddenly. They drift over time, quietly changing behavior without triggering alerts. A flaw in one agent can cascade across tasks to other agents, amplifying risk across the entire workflow. In autonomous processes, a single misclassification can propagate through downstream systems, corrupting records and breaking entire pipelines before anyone notices.
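One simple way to surface drift before it cascades is to compare the mix of recent decisions against a trusted baseline. The sketch below uses total variation distance between the two distributions; the function names and the 0.15 alert threshold are assumptions that would need tuning per workflow.

```python
from collections import Counter


def decision_shares(decisions):
    """Fraction of decisions per label, e.g. {"approve": 0.8, "deny": 0.2}."""
    counts = Counter(decisions)
    total = len(decisions)
    return {label: n / total for label, n in counts.items()}


def drift_score(baseline, recent):
    """Total variation distance: 0.0 means identical mix, 1.0 means disjoint."""
    p, q = decision_shares(baseline), decision_shares(recent)
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(l, 0.0) - q.get(l, 0.0)) for l in labels)


def has_drifted(baseline, recent, threshold=0.15):
    """True when the recent decision mix moved past the alert threshold."""
    return drift_score(baseline, recent) > threshold
```

For example, a baseline of 80% approvals and a recent window of 60% approvals gives a score of about 0.2, which would trip the default threshold. A check like this catches shifts in behavior even when every individual decision looks plausible on its own.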

Security Vulnerabilities Unique to Agentic Systems

The fundamental security weakness of large language models creates a problem most enterprises underestimate: there is no rigorous way to separate instructions from data. This opens the door to prompt injection attacks, where hidden commands embedded in content the agent processes cause it to leak sensitive information or take unauthorized actions.

Additional threats include cross-agent task escalation, synthetic identity spoofing, and untraceable data leakage between agents operating without audit oversight. Organizations face the greatest exposure when sensitive data, untrusted content, and external communication are all active simultaneously.
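The three-way combination described above can be checked explicitly at session start. This sketch does not prevent prompt injection; it only limits what a successful injection could do. `SessionContext`, `exposure_level`, and the choice to drop external communication (rather than one of the other two) are assumptions for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SessionContext:
    reads_sensitive_data: bool
    processes_untrusted_content: bool
    can_communicate_externally: bool


def exposure_level(ctx):
    """Classify a session by how many risk factors are active at once."""
    active = sum([
        ctx.reads_sensitive_data,
        ctx.processes_untrusted_content,
        ctx.can_communicate_externally,
    ])
    if active == 3:
        return "high"
    return "elevated" if active == 2 else "baseline"


def restrict(ctx):
    """When all three factors are active, remove external communication."""
    if exposure_level(ctx) == "high":
        return replace(ctx, can_communicate_externally=False)
    return ctx
```

Which capability to remove is a policy decision. The point of the sketch is that the combination is detected and broken before the agent starts processing content.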

Governance Gaps That Create Compliance Exposure

Just 18% of organizations have a governance body with authority to make decisions about responsible AI. Without clear accountability structures, a non-auditable agent provides no proof that its actions complied with regulations like GDPR, HIPAA, or relevant financial regulations. Every autonomous workflow needs defined ownership, audit trails, and escalation paths built in from day one.

How Can Enterprises Deploy Agentic AI Safely and Reliably?

1. A Structured 90-Day Deployment Path

Organizations following structured deployment frameworks can move from concept to an initial multi-department deployment in approximately 90 days:

Days 1 to 30: Select one high-volume, low-risk workflow, define KPIs including time saved and accuracy uplift, and run the agent in a sandboxed environment with least-privilege access

Days 31 to 60: Roll out stakeholder training, integrate SSO and audit logging, and communicate progress with regular updates across teams

Days 61 to 90: Measure ROI, run model drift checks and prompt analytics, and expand to two adjacent departments

2. Maintaining Control Through Continuous Monitoring

Deployment marks the start of governance, not the end. Implement continuous monitoring through dashboards that track behavioral anomalies, bias, and security violations in real time. A robust evaluation framework must operate across three layers:

Final output quality, including task completion, response accuracy, and safety

Individual component performance covering tool use, memory, and multi-turn handling

Underlying model behavior, tracking reasoning quality and responsibility metrics

Build kill switch capabilities for immediate containment of rogue behavior. Design systems to automatically reduce autonomy levels when security events are detected, allowing operations to continue safely while human operators investigate.
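Automatic autonomy reduction can be modeled as a ladder of levels with a cap per event severity. The level names, severity labels, and cap mapping below are assumptions for the sketch; the key property is that a security event can only lower autonomy, never raise it.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    HALTED = 0             # kill switch: no actions at all
    SUGGEST_ONLY = 1       # agent proposes, humans execute
    APPROVAL_REQUIRED = 2  # agent executes after sign-off
    FULL = 3               # agent executes on its own


# Highest autonomy level still permitted after an event of each severity.
SEVERITY_CAP = {
    "low": Autonomy.APPROVAL_REQUIRED,
    "medium": Autonomy.SUGGEST_ONLY,
    "critical": Autonomy.HALTED,
}


def apply_security_event(current, severity):
    """Return the new autonomy level; it is never higher than `current`."""
    return min(current, SEVERITY_CAP[severity])
```

Restoring a higher level is left out on purpose: in this design that step belongs to a human operator after investigation, not to the agent or the monitoring system.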

Industry research predicts 33% of enterprise applications will include agentic AI by 2028, up from less than 1% in recent years. Full deployment remains stagnant at 11%, and the gap exists not in model capabilities but in governance, infrastructure, and operational readiness. Organizations that wait for perfect solutions will find themselves outpaced by competitors deploying functional agents today.

Conclusion

Agentic AI is moving quickly from controlled pilots into real enterprise environments. The constraint is no longer model capability. It is production discipline. Teams that succeed treat agents as operational systems, not experimental features. That means building for continuity, enforcing data rigor, assigning clear ownership, and maintaining tight governance from the start. When these foundations are in place, agentic AI can coordinate work across complex environments with measurable impact.

The next phase of adoption will not be led by the most advanced models. It will be led by organizations that deploy agents deliberately, monitor them continuously, and keep humans firmly in the loop where risk demands it.

Partner with Trika Technologies to operationalize agentic AI inside your enterprise stack. From integration and orchestration to governance and observability, Trika helps teams deploy production-ready agents that perform reliably in high-volume, regulated environments. Enterprises that take this disciplined approach now are the ones most likely to scale agentic AI safely and extract sustained value from it.

Frequently Asked Questions

Q1. What percentage of deployment work involves infrastructure rather than model tuning?

Approximately 80%. Data engineering, stakeholder alignment, governance, and workflow integration dominate the effort, leaving only about 20% for model fine-tuning or prompt engineering.

Q2. What latency do production AI agents need to meet?

Fraud scoring requires 10 to 50 milliseconds. Customer service agents need under 100 milliseconds to feel instantaneous. Many workloads also require sub-millisecond vector search latency.

Q3. Why are prompt injection attacks so difficult to solve?

There is no rigorous way to separate instructions from data in large language models, meaning anything an agent reads can potentially be treated as a command. This allows malicious actors to embed hidden instructions in content the agent processes.

Q4. What governance is required before deploying agentic AI?

A cross-functional governance committee, agent identity mapping with least-privilege access, clear rules of engagement defining autonomous boundaries, and comprehensive audit trails for regulatory compliance.

Q5. How long does structured deployment take?

Approximately 90 days: 30 days for sandboxed proof of concept, 30 days for integration and change management rollout, and a final phase measuring ROI and expanding to adjacent departments.
